Understanding why a deep learning model behaves the way it does can be challenging: whether it’s xAI’s handling of Grok’s politics, sycophancy in ChatGPT, or ordinary hallucinations, navigating a neural network with billions of parameters is no small feat.

Guide Labs, a San Francisco-based startup led by CEO Julius Adebayo and chief science officer Aya Abdelsalam Ismail, has presented a solution. The company has open-sourced an 8-billion-parameter LLM, Steerling-8B, built on a new architecture designed to make its behavior interpretable: every token the model outputs can be traced back to its origins in the training data.

That tracing can be as simple as identifying the sources of the facts the model cites, or as complex as analyzing how it understands humor or gender.

Adebayo explained the complexity: “If I encode gender in 1 billion out of a trillion ways, you need to identify all those instances and manage them reliably. Current models can do this, but it’s fragile … It’s a quest we aim to address.”

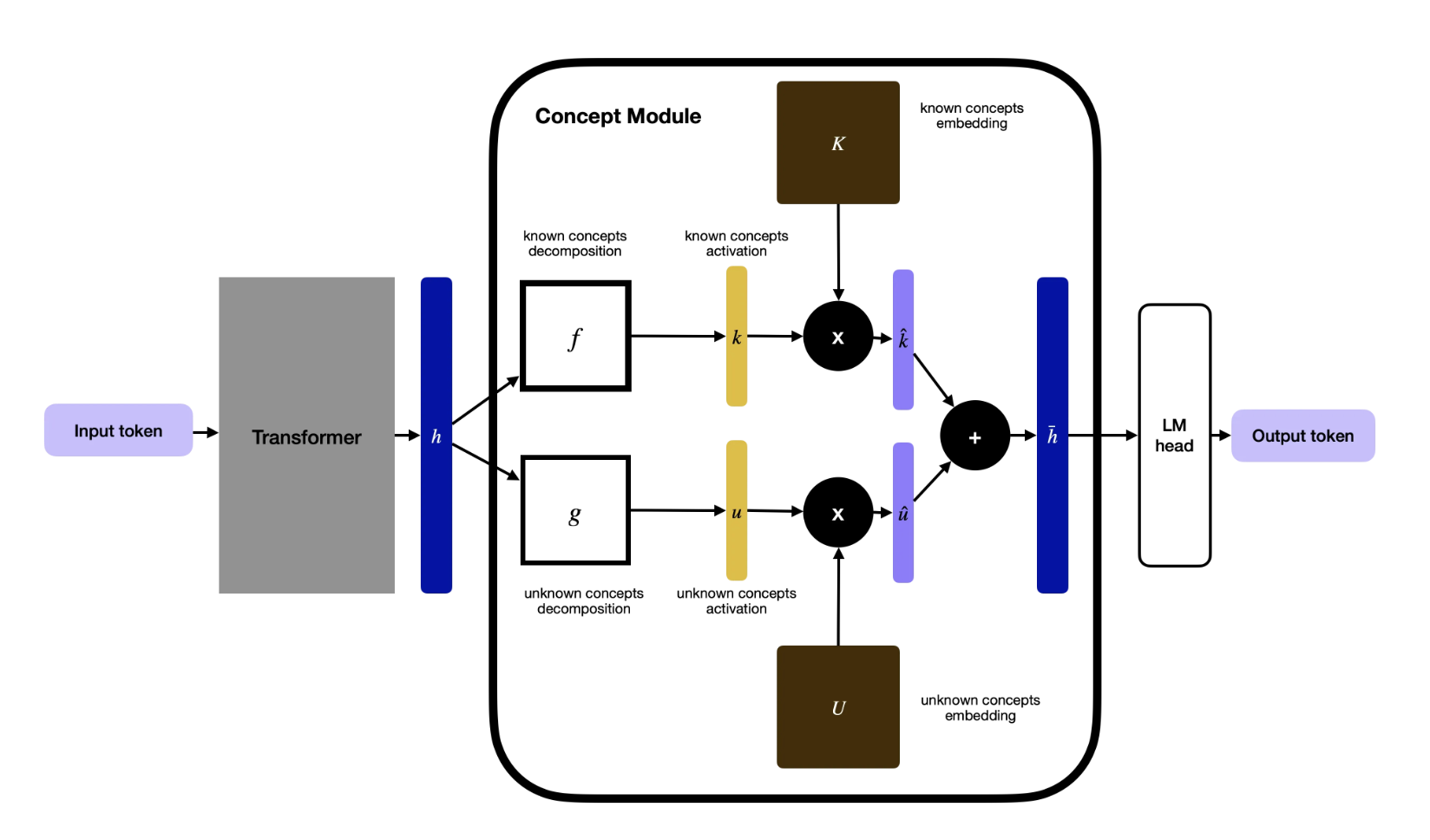

Adebayo’s work on the problem began during his PhD at MIT, which produced a significant 2018 paper showing that existing methods for understanding deep learning models were unreliable. That research led to a new way of building LLMs: a concept layer is built into the model and sorts data into traceable categories. The approach demands more up-front data annotation, but the team used other AI models to help with it, which allowed the company to train this model, its largest to date.
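The article does not detail how the concept layer is implemented. As a rough illustration of the general idea only (not Guide Labs’ actual architecture), the Python sketch below routes a hidden vector through a small set of named concepts and computes every output score from those concepts alone, so each score can be broken down by concept. The concept names, weights, and function names are all hypothetical, and the sketch covers only concept attribution, not tracing back to training data.

```python
import math

# Hypothetical concept names; a real model would have far more.
CONCEPTS = ["finance", "humor", "physics"]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def concept_layer(hidden, concept_weights):
    """Map a hidden vector to one activation in (0, 1) per named concept."""
    return [
        sigmoid(sum(w * h for w, h in zip(row, hidden)))
        for row in concept_weights
    ]


def output_logits(concepts, output_weights):
    """Compute token logits from concept activations only.

    Returns the logits and, for each token, the per-concept contributions
    that sum to that token's logit.
    """
    contributions = [
        [w * c for w, c in zip(row, concepts)] for row in output_weights
    ]
    logits = [sum(row) for row in contributions]
    return logits, contributions


def explain(token_index, contributions):
    """Rank concepts by the size of their contribution to one token."""
    pairs = zip(CONCEPTS, contributions[token_index])
    return sorted(pairs, key=lambda p: abs(p[1]), reverse=True)


def steer(concepts, name, value):
    """Return a copy of the activations with one concept overridden."""
    steered = list(concepts)
    steered[CONCEPTS.index(name)] = value
    return steered


# Toy numbers, chosen only for illustration.
hidden = [1.0, -2.0]
concept_weights = [[0.5, 0.0], [0.0, 0.5], [1.0, 1.0]]
output_weights = [[2.0, 0.0, -1.0], [0.0, 3.0, 0.5]]

concepts = concept_layer(hidden, concept_weights)
logits, contributions = output_logits(concepts, output_weights)
ranking = explain(0, contributions)

# Suppress one concept and recompute the scores.
steered_logits, _ = output_logits(
    steer(concepts, "finance", 0.0), output_weights
)
```

Because the output depends on the hidden state only through the named concepts, `explain` gives an exact decomposition of each score in this toy setup, and `steer` changes the output by editing a single, labeled value rather than searching for where a concept is encoded.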

“The prevalent interpretability method is akin to neuroscience for models, but we take a different approach,” Adebayo noted. “We build models such that neuroscience isn’t required.”

One concern with this approach is that it could suppress the emergent behaviors that let LLMs generalize beyond their training data. Adebayo says that is not the case: the model still discovers new concepts on its own, with quantum computing as one example.

Adebayo believes this interpretable design is essential across sectors. For consumer LLMs, it can let developers block the use of copyrighted material or better control outputs on sensitive topics like violence. In regulated fields like finance, a model assessing loan applicants must weigh financial records rather than race. Science stands to benefit as well: in areas such as protein folding, researchers need to understand why a model reached its decision.

“This model shows that training interpretable models is now an engineering issue, not just science,” Adebayo asserted. “We’ve refined the science, allowing scalability without losing performance compared to advanced models with more parameters.”

Guide Labs claims Steerling-8B achieves 90% of existing models’ capabilities while using less training data, which it attributes to the new architecture. The company emerged from Y Combinator and raised a $9 million seed round from Initialized Capital in November 2024. Its next steps are to build a larger model and to offer API and agentic access to users.

“The current model training approach is rudimentary. Democratizing innate interpretability will be beneficial in the long run,” Adebayo told TechCrunch. “As models evolve to super-intelligent levels, having them make inscrutable decisions independently could be concerning.”