Meta plans to introduce a similar feature for its chatbots later this year.

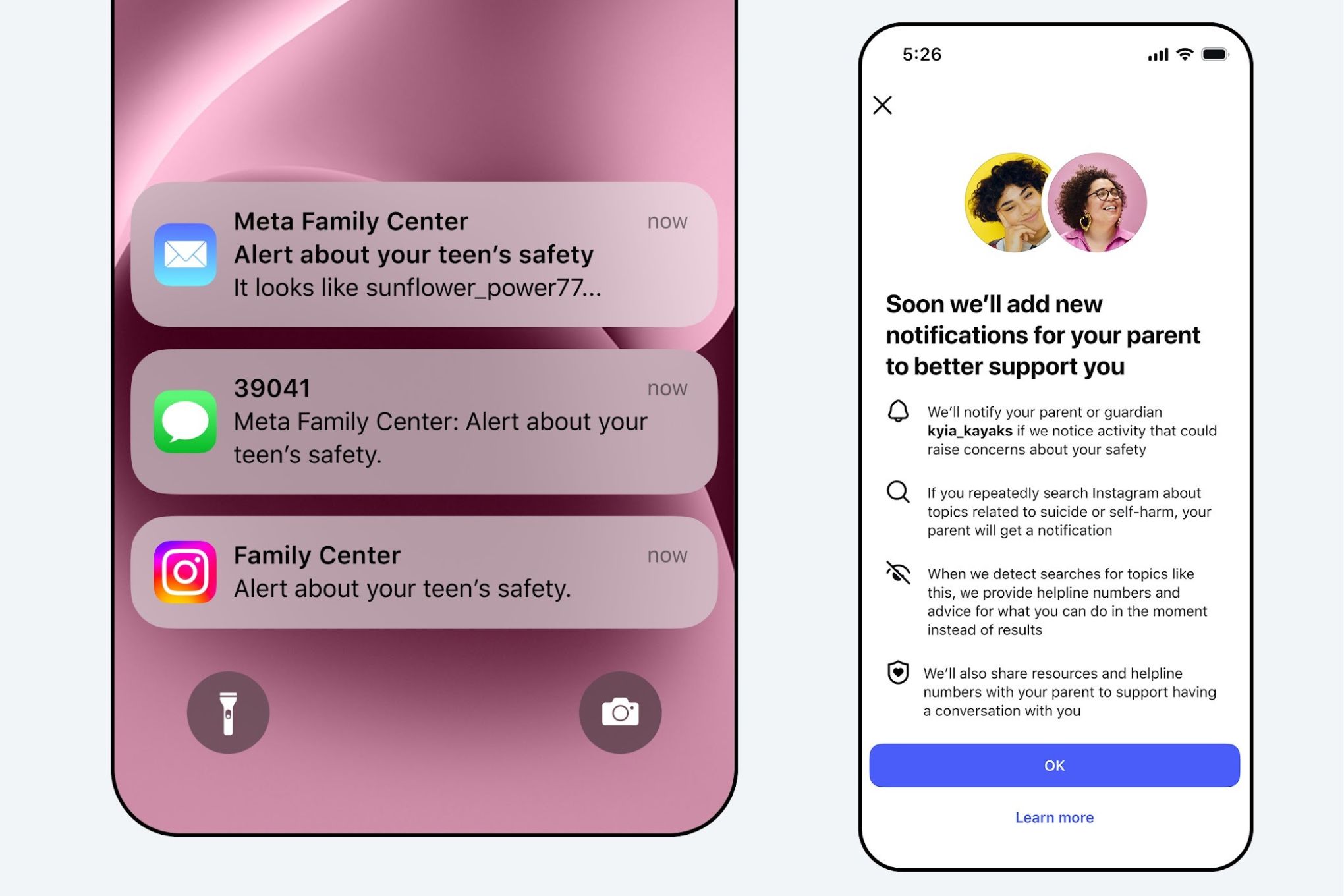

Starting next week, Instagram will alert parents if their teen searches for terms related to self-harm or suicide. Meta states that a similar alert system for AI chatbots will be launched later this year.

The feature alerts parents when their child repeatedly searches for terms linked to suicide or self-harm within a short time frame. It will initially roll out in the US, UK, Australia, and Canada for parents and teens who opt in to supervision, with plans to expand globally later this year.

Instagram emphasizes that most teens do not search for content related to suicide or self-harm, and that such searches are blocked and redirected to support resources. The aim is to empower parents to intervene if needed while avoiding excessive notifications, which could make the alerts less effective.

Parents will receive the alerts by email, text, or WhatsApp, in addition to in-app notifications that offer guidance on discussing sensitive topics with their child.