Following widespread complaints that Facebook has become an “AI slop hellscape,” Meta announced new tools to detect impersonation and updated guidelines defining “original content.”

Last year, the company began cracking down on spammy and unoriginal content, aiming to surface original creator work in its feeds and curb the low-quality posts hurting its reputation.

This focus is crucial to Facebook's success as a creator platform. If AI-generated content drowns out original voices and eats into creators' earnings, those creators may move elsewhere.

Meta said these efforts doubled views of, and time spent on, original content on Facebook in the second half of 2025 compared with the same period a year earlier.

Meta also reported progress against impersonators: it removed 20 million impersonating accounts last year, and impersonation reports targeting prominent creators fell 33%.

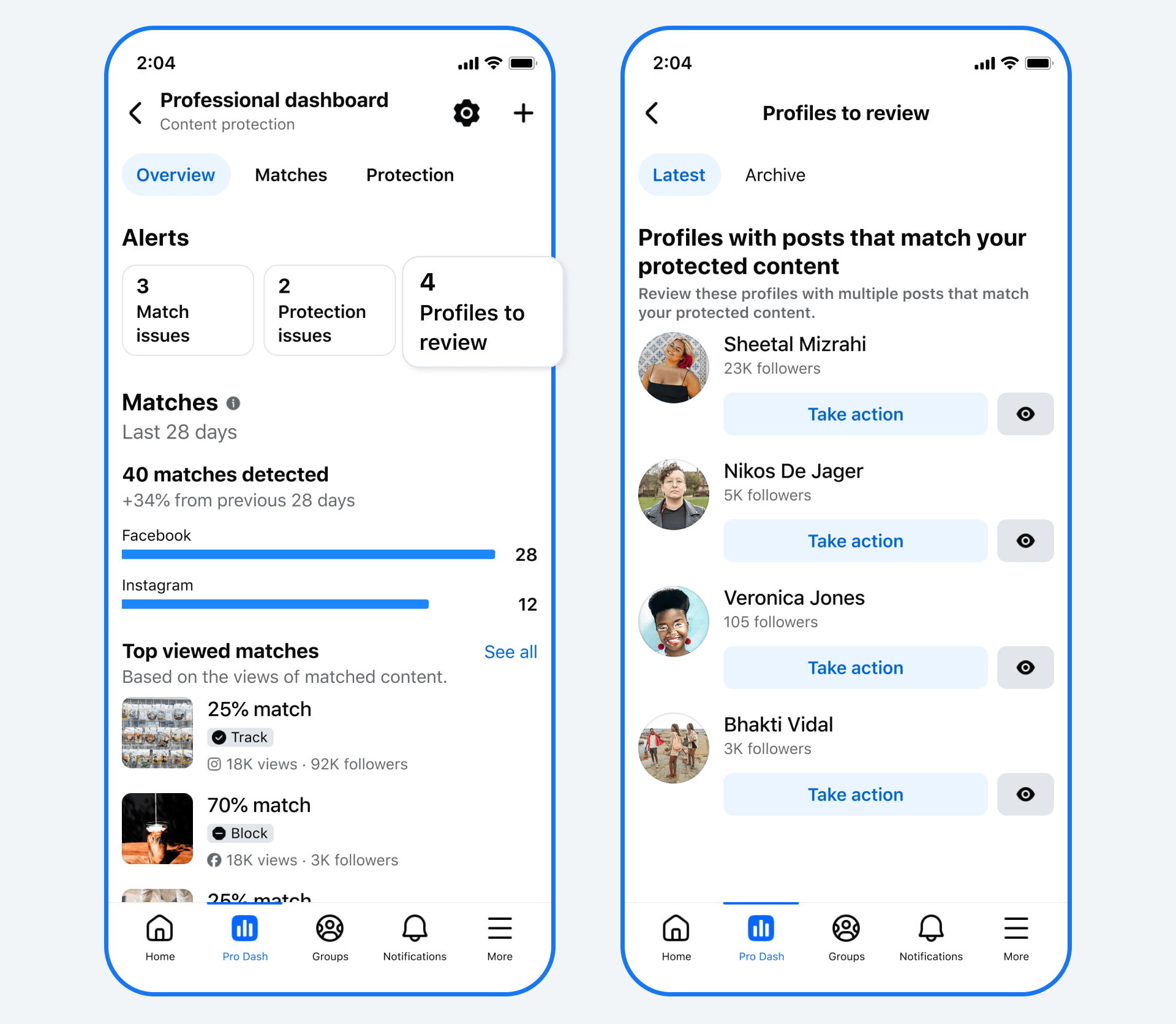

Facebook is now testing improvements to its content protection tools that let creators take action when impersonators publish their reels across platforms. The upcoming update is also meant to simplify the reporting process.

However, the tool currently focuses on matching duplicated content rather than detecting unauthorized use of a creator's likeness, a gap that still needs to be addressed.

Meta isn't the only platform grappling with AI's impact. YouTube has announced it will extend its AI deepfake detection tools to politicians, public figures, and journalists.

As part of the changes, Meta is updating Facebook's content guidelines to clarify what counts as "original" content: material that creators produce themselves, as well as reels that remix other content or add new analysis or discussion. Duplicated content and lightly edited versions of other creators' work will be deprioritized, meaning that re-uploading a video or making slight changes, such as adding a border, won't be enough to distinguish unoriginal content from its source.