**SaaS is dead! Long live SaaS.**

In 2026, AI is freeing builders and creators to develop sophisticated software in shorter timeframes, thanks to capable AI agents and open source software that speed up the build process.

**The “BYO Internet” Era of AI**

This AI era is reminiscent of early internet days, when everything was built from scratch. Today’s digital creators are on a similar journey. Building leads to understanding actual workflows, which AI can then automate. AI agents might eventually replace these workflows completely.

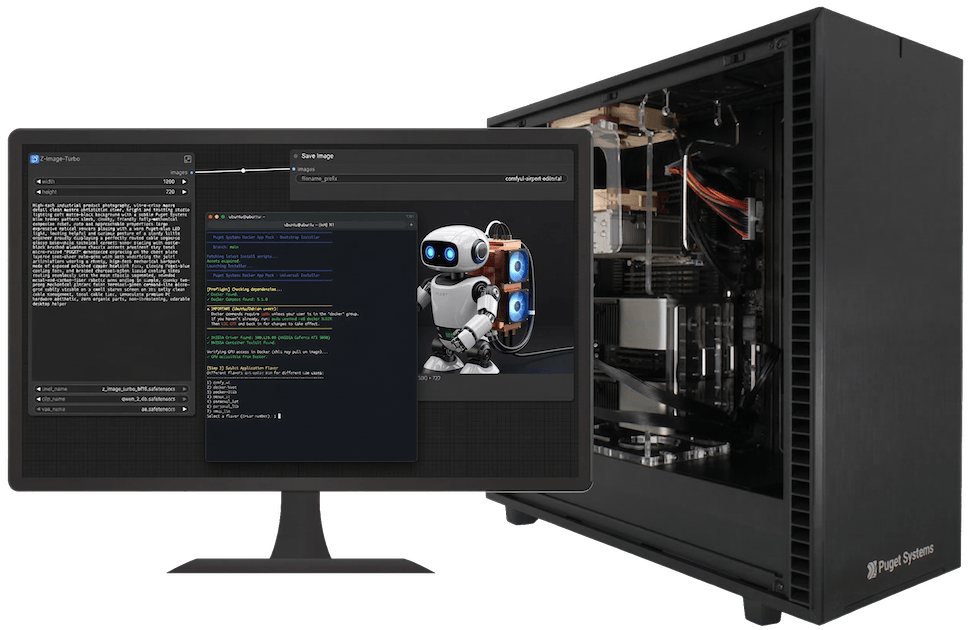

At Puget Systems, we see AI through our customers’ eyes and work to deliver on its promises. Our Puget Systems Docker App Packs are built in response to this and will soon be supported by a series of articles to aid in crafting strategies and building solutions on our AI workstations.

**Hardware Deep Dive: Threadripper PRO and the AI Workstation**

Taking advantage of this era requires robust local compute. The AMD Threadripper™ PRO architecture is well suited to AI development because it provides the PCIe lanes and memory bandwidth needed to keep data flowing to the GPUs.

Puget Systems’ AI product lines align with our Docker App Packs and are built for today’s demands. They move data efficiently by pairing Threadripper PRO’s PCIe lanes with the pooled VRAM of the GPUs.
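To make the PCIe and memory bandwidth point concrete, here is a rough back-of-the-envelope sketch. It assumes the publicly listed platform figures for the Threadripper PRO 5995WX used in the dev host described below (8 channels of DDR4-3200 and PCIe 4.0). The function names are illustrative, and the results are theoretical peaks, not measured throughput.

```python
def ddr_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (each channel is 64 bits = 8 bytes wide)."""
    return channels * mega_transfers_per_s * 1e6 * bus_bytes / 1e9


def pcie_bandwidth_gbs(lanes: int, giga_transfers_per_s: float) -> float:
    """Theoretical peak PCIe bandwidth per direction in GB/s.

    PCIe 3.0 and later use 128b/130b encoding, so 128 of every 130 bits carry data.
    """
    return lanes * giga_transfers_per_s * (128 / 130) / 8


# Assumed platform figures: 8-channel DDR4-3200, PCIe 4.0 at 16 GT/s per lane.
memory_peak = ddr_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
gpu_link_peak = pcie_bandwidth_gbs(lanes=16, giga_transfers_per_s=16)

print(f"System memory peak: {memory_peak:.1f} GB/s")
print(f"One x16 GPU link peak: {gpu_link_peak:.1f} GB/s per direction")
```

Under these assumptions, system memory peaks at roughly 205 GB/s and each x16 link at roughly 31.5 GB/s per direction, so two GPUs on full x16 links leave most of the platform's lanes free for storage and networking.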

**The DEV Host Machine (Rocky Linux)**

Our parent machine specs include an AMD Ryzen™ Threadripper™ PRO 5995WX, Rocky Linux, dual NVIDIA GeForce RTX™ 5090 GPUs, 128GB RAM, and 1.9TB SSDs.

**The Virtualized AI Environment (Ubuntu)**

The virtual environment uses QEMU virtualization, runs Ubuntu, and has access to both RTX 5090 GPUs.
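Before building on a virtualized setup like this, it helps to confirm that the guest actually sees both GPUs. The sketch below is one way to check, assuming the NVIDIA driver and the `nvidia-smi` tool are installed inside the Ubuntu guest. The helper names `parse_gpu_list` and `list_gpus` are hypothetical and are not part of the App Packs.

```python
import subprocess

# nvidia-smi query: one CSV row per GPU, no header, numbers without units.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,memory.total,name",
    "--format=csv,noheader,nounits",
]


def parse_gpu_list(csv_text: str) -> list[dict]:
    """Turn nvidia-smi CSV rows into dictionaries (memory is reported in MiB)."""
    gpus = []
    for line in csv_text.strip().splitlines():
        # Split at most twice so a comma inside the GPU name cannot break parsing.
        index, memory_mib, name = [field.strip() for field in line.split(",", 2)]
        gpus.append({"index": int(index), "memory_mib": int(memory_mib), "name": name})
    return gpus


def list_gpus() -> list[dict]:
    """Run nvidia-smi and return the GPUs visible to this machine or VM."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_list(result.stdout)
```

Calling `list_gpus()` inside the guest should return two entries on a dual-GPU configuration. If it returns one or raises an error, the problem lies in the virtualization or driver layer and not in Docker.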

**The 1 to 2 GPU Time Chasm**

Starting with AI is easy, but scaling from one GPU to two is challenging. Docker setups that work on a single GPU often falter in multi-GPU contexts.
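One reason the second GPU becomes necessary is simple arithmetic: model weights must fit in VRAM. The sketch below is a rough estimate only, assuming 32 GB of VRAM per RTX 5090 and an assumed 90% usable fraction. It counts weights alone and ignores the KV cache, activations, and framework overhead, so real requirements will be higher.

```python
import math

GPU_VRAM_GB = 32  # VRAM on a single GeForce RTX 5090


def weight_footprint_gb(params_billion: float, bytes_per_param: float) -> float:
    """Approximate weights-only footprint in GB (1 billion params x 1 byte = 1 GB)."""
    return params_billion * bytes_per_param


def gpus_needed(params_billion: float, bytes_per_param: float,
                usable_fraction: float = 0.9) -> int:
    """Minimum GPU count to hold the weights, given an assumed usable VRAM fraction."""
    usable_gb = GPU_VRAM_GB * usable_fraction
    return math.ceil(weight_footprint_gb(params_billion, bytes_per_param) / usable_gb)


# Illustrative model sizes, not specific models from this article.
for params, width, label in [(8, 2, "8B @ 16-bit"), (32, 1, "32B @ 8-bit"), (32, 2, "32B @ 16-bit")]:
    print(f"{label}: ~{weight_footprint_gb(params, width):.0f} GB weights, "
          f"needs at least {gpus_needed(params, width)} GPU(s)")
```

By this estimate, an 8-billion-parameter model at 16-bit precision fits on one card, while a 32-billion-parameter model at 8-bit already needs two. That is where single-GPU container setups stop being enough.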

**The Illusion of Success**

Early successes with Ollama and DeepSeek models eventually exposed the system’s limitations. Pivoting to vLLM solved the multi-GPU issues but required configuring models manually.
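As an illustration of what manual configuration looks like with vLLM, the sketch below assembles a `vllm serve` command that shards a model across two GPUs using vLLM's `--tensor-parallel-size` option. The model name is a placeholder and the helper `vllm_serve_command` is hypothetical. This is not the App Packs' actual configuration, only a minimal example of the idea.

```python
import shlex


def vllm_serve_command(model: str, tensor_parallel_size: int = 2, port: int = 8000) -> list[str]:
    """Build the argument list for launching vLLM's OpenAI-compatible server."""
    return [
        "vllm", "serve", model,
        # Split each layer's weights across this many GPUs.
        "--tensor-parallel-size", str(tensor_parallel_size),
        "--port", str(port),
    ]


# "your-org/your-model" is a placeholder; the model must be named explicitly.
cmd = vllm_serve_command("your-org/your-model")
print(shlex.join(cmd))
```

Building the command as a list keeps it safe to pass to `subprocess.run(cmd)` without shell quoting issues, and makes the GPU count an explicit parameter instead of something buried in a script.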

**Introducing the App Packs**

Our App Packs, available on GitHub, provide reliable starting points for setting up applications. Each serves a specific need, from general development to creative work and team LLMs.

**Getting Started is a One-Liner**

On a Puget Systems AI workstation, a single command in Ubuntu sets up Docker and the necessary stack.

**Long Live SaaS!**

The SaaS model is evolving with AI, requiring integration with API-driven ecosystems. Organizations become AI companies as they deploy models locally, avoiding long-term dependency on cloud providers.

Our App Packs enable local deployment, striking a balance between local control and the advantages of cloud services.

Stay tuned for more articles on benchmarking and building AI with our tools. Your feedback is welcome.