Nvidia CEO Jensen Huang was ahead of the market when he directed the company to develop AI-specific chips in 2010, long before the current AI buzz. In 2020, a strategic acquisition aimed at improving data center networking gave rise to one of Nvidia’s fastest-growing and most lucrative divisions. Networking is now the company’s second-largest revenue driver, behind computing. Last quarter, the division generated $11 billion, a 267% year-over-year increase, and it brought in more than $31 billion for the year, according to Nvidia’s earnings.

Propelled by growth in AI processing, the division includes NVLink for GPU communication within data center racks, Nvidia InfiniBand switches, Spectrum-X for AI networking, and co-packaged optics switches. Together, these technologies help build an “AI factory” data center for training AI models.

Kevin Cook, a strategist at Zacks Investment Research, called Nvidia’s networking business impressive: at $11 billion in a single quarter, he noted, it outperforms Cisco’s annual networking business. Yet it doesn’t get the attention that Nvidia’s larger chip or gaming businesses do.

Nvidia’s networking business originated with Mellanox, which Nvidia acquired in 2020 for $7 billion. Kevin Deierling, a senior VP of networking at Nvidia, joined the company from Mellanox. He said that some people may not see the importance of networking beyond basic connectivity, but he emphasized its foundational role in computing. Deierling didn’t initially understand the acquisition of Mellanox but acknowledged that it now complements the GPU business.

Cook added that Mellanox was seen as the missing piece needed to make GPUs a comprehensive package. Deierling believes the division’s success stems from selling the technology as full-stack solutions through partners rather than as individual products.

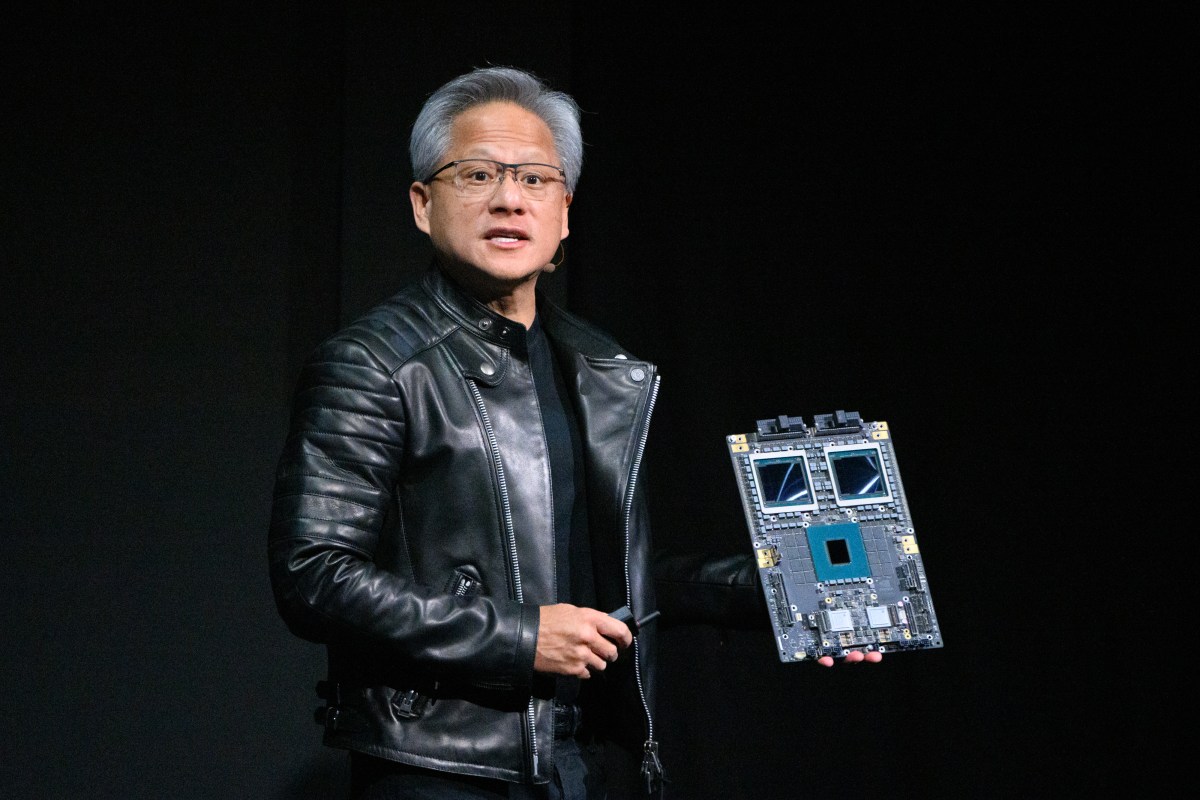

Nvidia announced updates to its networking system during Huang’s keynote at Nvidia GTC, launching the Nvidia Rubin platform with six new chips for an “AI supercomputer,” a new Nvidia Inference Context Memory Storage platform, and more efficient Spectrum-X Ethernet Photonics switches.

Networking is now crucial, acting as the backbone of the AI factory.