On Tuesday, Google published a research blog post describing a new compression algorithm for AI models, and memory stocks fell in response. Within hours, Micron was down 3 per cent, Western Digital had lost 4.7 per cent, and SanDisk had dropped 5.7 per cent, as investors reconsidered how much physical memory the AI industry will need.

The algorithm, TurboQuant, targets a costly bottleneck in running large language models: the key-value cache. This high-speed store holds context information so that the model does not have to recompute it for every new token it generates. As models handle longer inputs, the cache grows rapidly and consumes GPU memory that could otherwise serve more users or run larger models. Google says TurboQuant compresses the cache to 3 bits per value, down from the typical 16, and reduces its memory footprint by more than six times without significant accuracy loss in its benchmarks.
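A back-of-the-envelope calculation makes the memory arithmetic concrete. The model shape used below (32 layers, 8 key-value heads, head dimension 128, a 32,768-token context) is hypothetical and does not come from any model in Google's tests. The formula also ignores quantization constants, padding, and framework overhead, and the function name is illustrative. If you count bit width alone, going from 16 to 3 bits gives a ratio of about 5.3. The article does not say exactly how the six-fold figure is measured.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value, batch=1):
    """Approximate KV-cache size in bytes (hypothetical helper).

    Stores one key vector and one value vector per token, per layer, per KV
    head. Ignores quantization constants, padding, and runtime overhead.
    """
    values = 2 * layers * kv_heads * head_dim * seq_len * batch  # 2 = key + value
    return values * bits_per_value / 8


# Hypothetical model shape, for illustration only.
fp16 = kv_cache_bytes(32, 8, 128, 32768, 16)
q3 = kv_cache_bytes(32, 8, 128, 32768, 3)
print(fp16 / 2**30, q3 / 2**30)  # sizes in GiB
```

For this shape, the cache for a single sequence takes 4 GiB at 16 bits and 0.75 GiB at 3 bits.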

The paper, which will be presented at ICLR 2026, was written by Amir Zandieh, a Google research scientist, and Vahab Mirrokni, a vice president and Google Fellow, together with collaborators from Google DeepMind, KAIST, and New York University. It builds on two earlier papers: QJL, presented at AAAI 2025, and PolarQuant, due to appear at AISTATS 2026.

How it works

TurboQuant's key innovation is that it eliminates the overhead that limits most compression techniques. Traditional quantization methods shrink data vectors, but they must also store extra constants: normalization values needed to decompress the data accurately. These constants typically add one or two bits per number, which partially offsets the compression.
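The overhead is easiest to see in conventional block-wise min-max quantization, sketched below. This is a generic scheme and not anything specific to Google's baselines. The function names are hypothetical, and so is the assumption of one 16-bit scale plus one 16-bit offset per block, since real schemes vary in what they store.

```python
def quantize_block(values, bits):
    """Min-max quantization of one block of floats to `bits`-bit integer codes.

    Returns the codes plus the two per-block constants (scale and offset)
    that a decoder needs: these are the "normalization values" overhead.
    """
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_block(codes, scale, lo):
    """Reconstruct approximate floats from the codes and the stored constants."""
    return [lo + c * scale for c in codes]


def effective_bits_per_value(bits, block_size, constant_bits=32):
    """Code bits plus the constant overhead amortized over the block.

    constant_bits=32 assumes one 16-bit scale and one 16-bit offset per block.
    """
    return bits + constant_bits / block_size
```

With blocks of 32 values, `effective_bits_per_value(3, 32)` returns 4.0. With blocks of 16 it returns 5.0. These results match the one or two extra bits per number described above.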

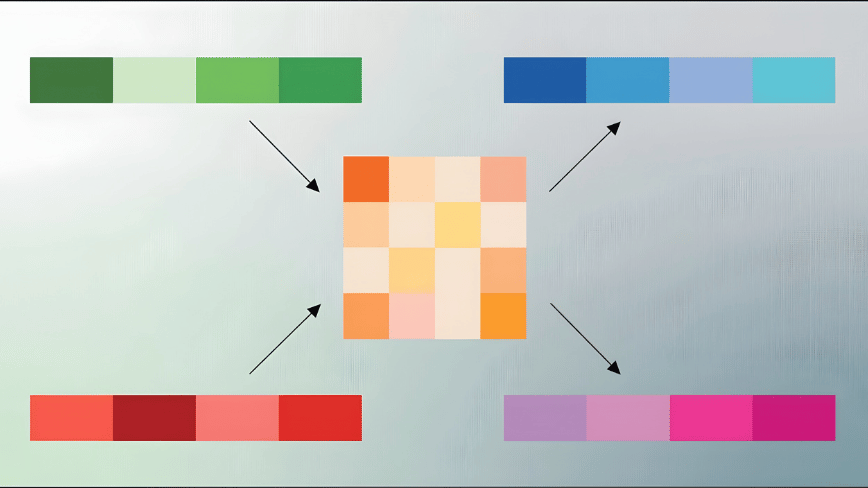

TurboQuant sidesteps this with a two-stage process. The first stage, PolarQuant, converts data vectors from standard Cartesian coordinates into polar coordinates, splitting each vector into a magnitude and a set of angles. Because the angles follow a predictable, concentrated distribution, the system can skip the costly per-block normalization step. The second stage applies QJL, a method based on the Johnson-Lindenstrauss transform, which reduces the small residual error left by the first stage to a single sign bit per dimension. The result is a representation that spends most of its bit budget on capturing the meaning of the original data and only a small remainder on error correction, with nothing spent on normalization constants.
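The two stages can be illustrated with a toy sketch in plain Python. It is not Google's implementation. The following choices are all assumptions made for illustration:

- the recursive pairwise polar transform;
- the fixed uniform angle grid;
- the seeded Gaussian projection matrix;
- every function name.

Stage 1 keeps one radius plus quantized angles. The angle ranges are fixed in advance, so no per-block scale or offset is stored. Stage 2 keeps the signs of `m` random projections of the residual, plus the residual's norm. It then corrects inner products with an unquantized query using the QJL-style estimator sqrt(pi/2) * ||r|| / m * sum_i (s_i . q) * sign(s_i . r), which is unbiased over the random projections. Setting `m` equal to the vector length gives the one-sign-bit-per-dimension budget described above. For simplicity, the sketch keeps the radius and the residual norm in full precision.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


# --- Stage 1 (PolarQuant-like, illustrative): magnitude plus angles ---
def polar_encode(x):
    """Recursive pairwise polar transform: returns (radius, angle levels)."""
    if not x or len(x) & (len(x) - 1):
        raise ValueError("vector length must be a power of two")
    radii, angles = list(x), []
    while len(radii) > 1:
        pairs = [(radii[i], radii[i + 1]) for i in range(0, len(radii), 2)]
        angles.append([math.atan2(b, a) for a, b in pairs])
        radii = [math.hypot(a, b) for a, b in pairs]
    return radii[0], angles


def polar_decode(radius, angles):
    """Invert polar_encode, rebuilding coordinates from the top level down."""
    radii = [radius]
    for level in reversed(angles):
        radii = [c for r, t in zip(radii, level)
                 for c in (r * math.cos(t), r * math.sin(t))]
    return radii


def quantize_angles(angles, bits):
    """Snap each angle to a fixed uniform grid over its known range.

    Level 0 spans [-pi, pi]. Deeper levels come from non-negative radii and
    span [0, pi/2]. Ranges are fixed in advance, so no per-block scale or
    offset is stored (a real encoder would keep only the integer codes).
    """
    steps = (1 << bits) - 1
    out = []
    for depth, level in enumerate(angles):
        lo, hi = (-math.pi, math.pi) if depth == 0 else (0.0, math.pi / 2)
        step = (hi - lo) / steps
        out.append([lo + round((t - lo) / step) * step for t in level])
    return out


# --- Stage 2 (QJL-like): one sign bit per random projection of the residual ---
def projections(d, m, seed):
    """Gaussian projection matrix, regenerated from a seed rather than stored."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]


def compress(x, angle_bits=3, m=None, seed=0):
    radius, angles = polar_encode(x)
    q_angles = quantize_angles(angles, angle_bits)
    residual = [a - b for a, b in zip(x, polar_decode(radius, q_angles))]
    S = projections(len(x), m if m is not None else len(x), seed)
    sign_bits = [dot(row, residual) >= 0 for row in S]
    return radius, q_angles, sign_bits, math.sqrt(dot(residual, residual)), seed


def estimate_inner_product(query, compressed):
    """Stage-1 inner product plus an unbiased sign-bit correction for the residual."""
    radius, q_angles, sign_bits, res_norm, seed = compressed
    base = dot(query, polar_decode(radius, q_angles))
    S = projections(len(query), len(sign_bits), seed)
    total = sum(dot(row, query) * (1.0 if bit else -1.0)
                for row, bit in zip(S, sign_bits))
    return base + math.sqrt(math.pi / 2) * res_norm / len(sign_bits) * total
```

Calling `compress(x)` on an 8-dimensional vector keeps 8 sign bits. Raising `m` lowers the variance of the correction term, but it spends more bits to do so.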

Google tested TurboQuant on five long-context benchmarks, including LongBench, Needle in a Haystack, and ZeroSCROLLS, using open-source models from the Gemma, Mistral, and Llama families. At 3 bits, TurboQuant matched or outperformed KIVI, the standard baseline for key-value cache quantization, which was published at ICML 2024. On needle-in-a-haystack retrieval tasks, which test a model's ability to locate information in long passages, TurboQuant achieved perfect scores while compressing the cache sixfold. At 4-bit precision, it delivered up to an eight-fold speedup in computing attention on Nvidia H100 GPUs compared with the uncompressed 32-bit baseline.

What the market heard

The stock reaction was swift, and some analysts considered it excessive. Wells Fargo analyst Andrew Rocha said that TurboQuant directly targets the memory cost constraints of AI systems. If the technique is widely adopted, it raises the question of how much memory capacity the industry actually needs. However, Rocha and others cautioned that demand for AI memory remains strong. They also noted that compression algorithms have existed for a long time without significantly changing procurement volumes.

The concern has substance. AI infrastructure spending is rising rapidly. Meta has committed up to $27 billion in a recent deal with Nebius for dedicated compute capacity, and Google, Microsoft, and Amazon plan to spend hundreds of billions in capital expenditure on data centers through 2026. A technology that cuts memory needs sixfold does not cut spending sixfold, because memory is only one component of data-center cost. It does, however, change the ratio. At this scale of spending, even small efficiency gains add up quickly.
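Why a six-fold memory cut does not mean a six-fold spending cut comes down to simple arithmetic. The sketch below makes a simplifying assumption: memory cost scales linearly with capacity, and every other cost stays fixed. The memory share of total cost is a hypothetical input, not a figure from the article, and the function name is illustrative.

```python
def total_cost_saving(memory_share, memory_reduction):
    """Fraction of total cost saved when only the memory component shrinks.

    memory_share: memory's (hypothetical) fraction of total cost, in [0, 1].
    memory_reduction: factor by which memory needs shrink, e.g. 6.
    Assumes memory cost scales linearly with capacity; other costs are fixed.
    """
    if not 0.0 <= memory_share <= 1.0 or memory_reduction < 1:
        raise ValueError("share must be in [0, 1] and reduction must be >= 1")
    return memory_share * (1 - 1 / memory_reduction)
```

Suppose memory were 30 per cent of cost. Then `total_cost_saving(0.3, 6)` gives about 0.25, a 25 per cent saving rather than 83 per cent.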

The efficiency question

TurboQuant arrives as the AI sector grapples with the economics of inference. Training a model is a large one-time expense, but running it is the recurring cost. Whether an AI product can scale profitably depends on serving millions of queries a day with acceptable latency and accuracy. The key-value cache sits at the center of this equation. It is the bottleneck that limits how many users a GPU can serve concurrently and how long a context a model can support.
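The concurrency limit follows from dividing the GPU memory left over after loading the weights by the size of each sequence's KV cache. The sketch below assumes a hypothetical 80 GiB memory budget with 16 GiB of weights. It ignores activations, fragmentation, and runtime overhead, and the function name is illustrative.

```python
GIB = 2**30


def max_concurrent_sequences(gpu_bytes, weight_bytes, kv_bytes_per_sequence):
    """Number of full per-sequence KV caches that fit after the weights are loaded.

    Hypothetical helper: ignores activations, fragmentation, and runtime overhead.
    """
    if kv_bytes_per_sequence <= 0:
        raise ValueError("KV cache size per sequence must be positive")
    free = gpu_bytes - weight_bytes
    if free <= 0:
        return 0
    return int(free // kv_bytes_per_sequence)
```

Suppose each sequence needs 4 GiB of KV cache. Then `max_concurrent_sequences(80 * GIB, 16 * GIB, 4 * GIB)` returns 16. Shrinking each cache to 0.75 GiB raises that to 85.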

Compression methods like TurboQuant are part