When it comes to deploying local LLMs, many assume that spending more yields better performance, but this isn't always true. That's why Sipeed created the "AI Agent Local LLM Inference Device Deployment Guide" on the llmdev.guide website.

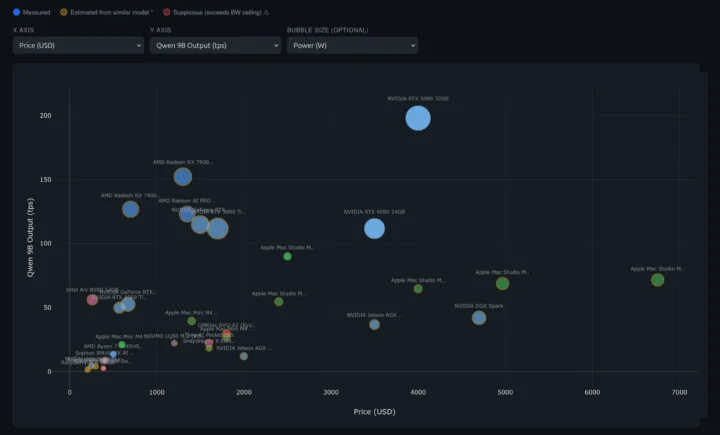

The site lists various hardware options along with their price, performance (tokens/s), power consumption, and more for different LLMs. For instance, with Qwen3.5-9B, expensive setups such as the NVIDIA DGX Spark or Apple Mac Studio M3 deliver tokens/s similar to those of a $260 Intel Arc B580 12GB graphics card.

If budget isn't a concern, the NVIDIA GeForce RTX 5090 32GB offers high performance. However, price comparisons can be misleading, since some prices cover an entire system while others cover only a graphics card. Fewer devices are listed for Qwen3.5-122B-A10B because of the model's large memory requirements, and among them the NVIDIA DGX Spark provides the best price/performance, ahead of an Apple Mac Studio M3 Ultra 256GB.
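A back-of-the-envelope calculation shows why so few devices can run the larger models. The sketch below assumes 4-bit quantized weights (the guide's actual quantization settings aren't stated here, and common 4-bit formats use slightly more than 4 bits per weight) and ignores KV cache and runtime overhead unless you pass an explicit margin. The function names are illustrative only.

```python
# Rough estimate of how much memory an LLM's weights need.
# Assumption: weights dominate memory use; KV cache, activations and
# runtime overhead are folded into an optional overhead_gb term.

def weight_memory_gb(total_params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = total_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_memory(total_params_billion: float, bits_per_weight: float,
                   device_memory_gb: float, overhead_gb: float = 0.0) -> bool:
    """True if the weights plus the assumed overhead fit in device memory."""
    needed = weight_memory_gb(total_params_billion, bits_per_weight) + overhead_gb
    return needed <= device_memory_gb


if __name__ == "__main__":
    # For MoE models, use the TOTAL parameter count: every expert must be
    # resident in memory even though only a few are active per token.
    models = {
        "Qwen3.5-9B": 9,
        "Qwen3.5-27B": 27,
        "Qwen3.5-35B-A3B": 35,
        "Qwen3.5-122B-A10B": 122,
        "Qwen3.5-397B-A17B": 397,
    }
    for name, params in models.items():
        print(f"{name}: ~{weight_memory_gb(params, 4):.1f} GB of weights at 4 bits/weight")
```

At 4 bits per weight, the 122B model needs roughly 61 GB for its weights alone, well beyond a 12GB or 32GB graphics card, while it fits in the 128GB of unified memory on a DGX Spark or in a 256GB Mac Studio.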

The chart's X axis, Y axis, and bubble size can each be mapped to one of several parameters, including device memory bandwidth and capacity, claimed TOPS, LLM output and prefill speeds, and ratios such as perf/watt and perf/dollar.
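The perf/watt and perf/dollar ratios are simple divisions, but the caveat about GPU-only prices matters when computing them. Here is a minimal sketch, assuming hypothetical field names and an assumed host-system cost added to GPU-only entries; the guide itself may not normalize prices this way.

```python
from dataclasses import dataclass


@dataclass
class BenchResult:
    name: str
    output_tps: float    # output (decode) speed in tokens/s
    avg_watts: float     # average power draw during the run
    price_usd: float     # listed price
    gpu_only: bool       # True if the price covers only a graphics card


def perf_per_watt(r: BenchResult) -> float:
    """Tokens/s per watt, which is the same as tokens per joule."""
    return r.output_tps / r.avg_watts


def perf_per_dollar(r: BenchResult, host_cost_usd: float = 0.0) -> float:
    """Tokens/s per dollar. For GPU-only prices, add an assumed host cost
    so the result is comparable with complete systems."""
    price = r.price_usd + (host_cost_usd if r.gpu_only else 0.0)
    return r.output_tps / price


if __name__ == "__main__":
    # Hypothetical numbers for illustration only, not measurements from the guide.
    results = [
        BenchResult("device_a", output_tps=50.0, avg_watts=200.0, price_usd=1000.0, gpu_only=True),
        BenchResult("device_b", output_tps=40.0, avg_watts=100.0, price_usd=3000.0, gpu_only=False),
    ]
    for r in results:
        print(f"{r.name}: {perf_per_watt(r):.3f} tok/s/W, "
              f"{perf_per_dollar(r, host_cost_usd=600.0):.4f} tok/s/$")
```

Changing `host_cost_usd` shows how sensitive a GPU-only card's perf/dollar ranking is to what you assume the rest of the system costs.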

Benchmarks are based on the following Qwen3.5 models:

– Qwen3.5-9B: Small device baseline

– Qwen3.5-27B: Mid-range device baseline

– Qwen3.5-35B-A3B (MoE): Optional performance reference

– Qwen3.5-122B-A10B (MoE): Large memory device reference

– Qwen3.5-397B-A17B (MoE): Flagship device reference
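The "A3B", "A10B" and "A17B" suffixes give the number of parameters active per token in these MoE models. Since output speed on most local hardware is limited mainly by memory bandwidth, a common rule of thumb divides bandwidth by the bytes read per generated token. A hedged sketch, assuming 4-bit weights and a hypothetical 256 GB/s device:

```python
# Rough upper bound on decode (output) speed for a memory-bandwidth-bound LLM.
# Assumption: each generated token reads every ACTIVE weight once from memory;
# KV-cache reads, compute limits and software overhead are ignored, so real
# results will be lower.

def decode_ceiling_tps(bandwidth_gb_s: float, active_params_billion: float,
                       bits_per_weight: float) -> float:
    gb_read_per_token = active_params_billion * bits_per_weight / 8
    return bandwidth_gb_s / gb_read_per_token


if __name__ == "__main__":
    bandwidth = 256.0  # hypothetical device memory bandwidth in GB/s
    for name, active in [("Qwen3.5-27B (dense)", 27), ("Qwen3.5-35B-A3B (MoE)", 3)]:
        print(f"{name}: <= {decode_ceiling_tps(bandwidth, active, 4):.1f} tokens/s")
```

This is why a 35B MoE model with only 3B active parameters can decode much faster than the dense 27B model on the same device, even though it needs more memory to hold all of its experts.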

There's no price filter, but switching to a logarithmic scale makes the entry-level options easier to tell apart, or you can zoom in on a specific area of the chart. A list view is also available for sorting devices by price.

Clicking on an entry in the list or on a bubble in the chart brings up more details about the device, including its specifications and test results. Some figures are estimates; for example, the Raspberry Pi 5 16GB result for Qwen3.5-9B was extrapolated from Llama 7B results.
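The extrapolation method isn't described here. One plausible approach, shown as a sketch under the assumption that decode speed is memory-bandwidth-bound, scales the measured result by the ratio of weight bytes read per token. The function name and the example measurement are hypothetical.

```python
# Scale a measured decode speed from one model to another, assuming decode is
# memory-bandwidth-bound so tokens/s is inversely proportional to the bytes of
# weights read per token. This is NOT necessarily how llmdev.guide estimated
# its figures.

def extrapolate_tps(ref_tps: float, ref_active_params_b: float, ref_bits: float,
                    target_active_params_b: float, target_bits: float) -> float:
    ref_bytes = ref_active_params_b * ref_bits
    target_bytes = target_active_params_b * target_bits
    return ref_tps * ref_bytes / target_bytes


if __name__ == "__main__":
    measured_7b = 2.0  # hypothetical Llama 7B result in tokens/s
    est_9b = extrapolate_tps(measured_7b, 7, 4, 9, 4)
    print(f"Estimated 9B speed: {est_9b:.2f} tokens/s")
```

Architecture differences, context length and quantization formats can all shift real results, so figures obtained this way are only estimates.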

Hardware submissions are welcome, but the current process involves manual data entry. To add a new device, deploy the benchmark, follow the submission instructions, run at least Qwen3.5-9B with a long query, and take a photo of the board. Some automation would make submissions easier, for example a script similar to sbc-bench.sh or a wizard script that collects the data.
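As a rough idea of what such automation could look like, here is a minimal sketch that gathers basic system information with Python's standard library and packages it with benchmark results as JSON. The field names and the `build_submission` helper are hypothetical; the guide's actual submission format may differ, and running the benchmark itself is left out.

```python
import json
import os
import platform
from datetime import datetime, timezone
from typing import Optional


def collect_system_info() -> dict:
    """Basic host details available from the Python standard library."""
    return {
        "os": platform.system(),
        "os_release": platform.release(),
        "arch": platform.machine(),
        "cpu_count": os.cpu_count(),
    }


def build_submission(device: str, model: str, prefill_tps: float,
                     output_tps: float, avg_watts: Optional[float] = None) -> dict:
    """Bundle benchmark numbers with auto-collected system info (hypothetical schema)."""
    if prefill_tps <= 0 or output_tps <= 0:
        raise ValueError("throughput values must be positive")
    return {
        "device": device,
        "model": model,
        "prefill_tokens_per_s": prefill_tps,
        "output_tokens_per_s": output_tps,
        "avg_power_w": avg_watts,
        "system": collect_system_info(),
        "collected_at": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    # Placeholder values; a real wizard would take these from the benchmark run.
    record = build_submission("my-board", "Qwen3.5-9B", prefill_tps=100.0, output_tps=10.0)
    print(json.dumps(record, indent=2))
```

Collecting the system details automatically removes the most error-prone part of manual entry, leaving only the measured numbers and the board photo to supply.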

The UP Xtreme ARL AI Dev Kit has yet to be submitted because of the manual data entry involved. Still, the guide is a valuable resource that could become even more useful with further improvements.