Sam Altman’s 13-page policy blueprint, ‘Industrial Policy for the Intelligence Age,’ suggests safety nets, containment strategies for rogue AI, and direct citizen dividends from AI growth. He described it to Axios as a starting point, not a prescription.

OpenAI has released a policy document proposing extensive economic changes in anticipation of superintelligence. Its recommendations include taxes on automated labor, a national public wealth fund partly funded by AI companies, and trials of a 32-hour workweek.

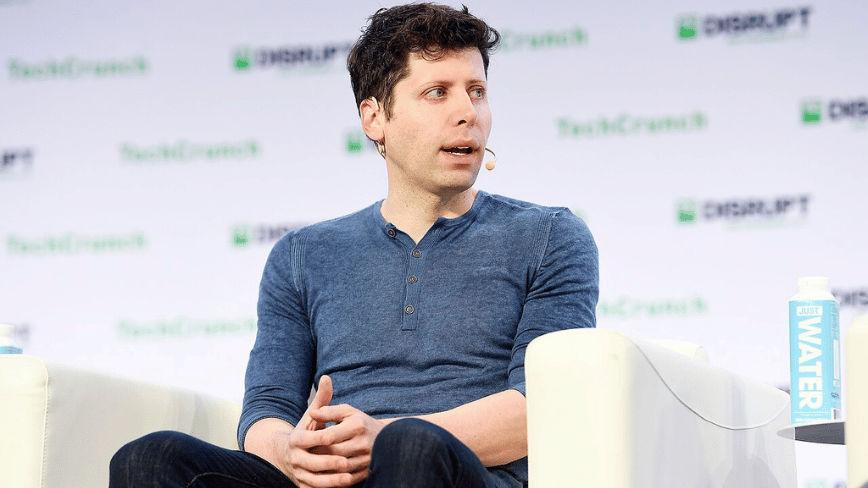

The document, titled ‘Industrial Policy for the Intelligence Age: Ideas to keep people first,’ arrives as Congress considers AI legislation. In an exclusive Axios interview, CEO Sam Altman compared the changes AI will bring to the Progressive Era and the New Deal, and named cyberattacks and AI-enabled biological weapons as immediate threats.

The document’s boldest proposal is the public wealth fund. OpenAI suggests a nationally managed fund, partially funded by AI companies, that would invest in AI firms and other tech businesses and distribute the returns directly to Americans.

This model resembles Alaska’s Permanent Fund, which pays annual dividends to residents from oil revenues.

On labor, the document suggests taxing automated labor and shifting the tax base from payroll toward capital gains and corporate income, acknowledging that AI could affect the wage and payroll revenue that funds Social Security.

The 32-hour workweek proposal is described as an ‘efficiency dividend’ from AI-driven productivity gains.

The document discusses ‘containment playbooks’ for scenarios in which dangerous AI systems become autonomous and able to replicate, and proposes government coordination in such cases.

The blueprint also suggests automatic safety net triggers: when AI-driven displacement reaches certain levels, benefits like unemployment payments and wage insurance would automatically increase, then decrease when conditions stabilize.

Altman told Axios that a major AI-enabled cyberattack is ‘totally possible’ within the next year and that using AI to create novel pathogens is ‘no longer theoretical.’

Altman was clear with Axios about the document’s dual nature. OpenAI is developing the technology it warns about while positioning itself as a responsible actor proposing solutions, a strategy for shaping regulation before the company itself is regulated. Anthropic has taken a similar approach.

The policy paper arrives as OpenAI prepares for an IPO, has closed a $110 billion private funding round, and faces scrutiny over its non-profit conversion.

Whether the document is driven by altruism or strategy, Altman told Axios: ‘Some [effects] will be good. Some will be bad. But we feel a sense of urgency. We want to see these issues debated seriously.’