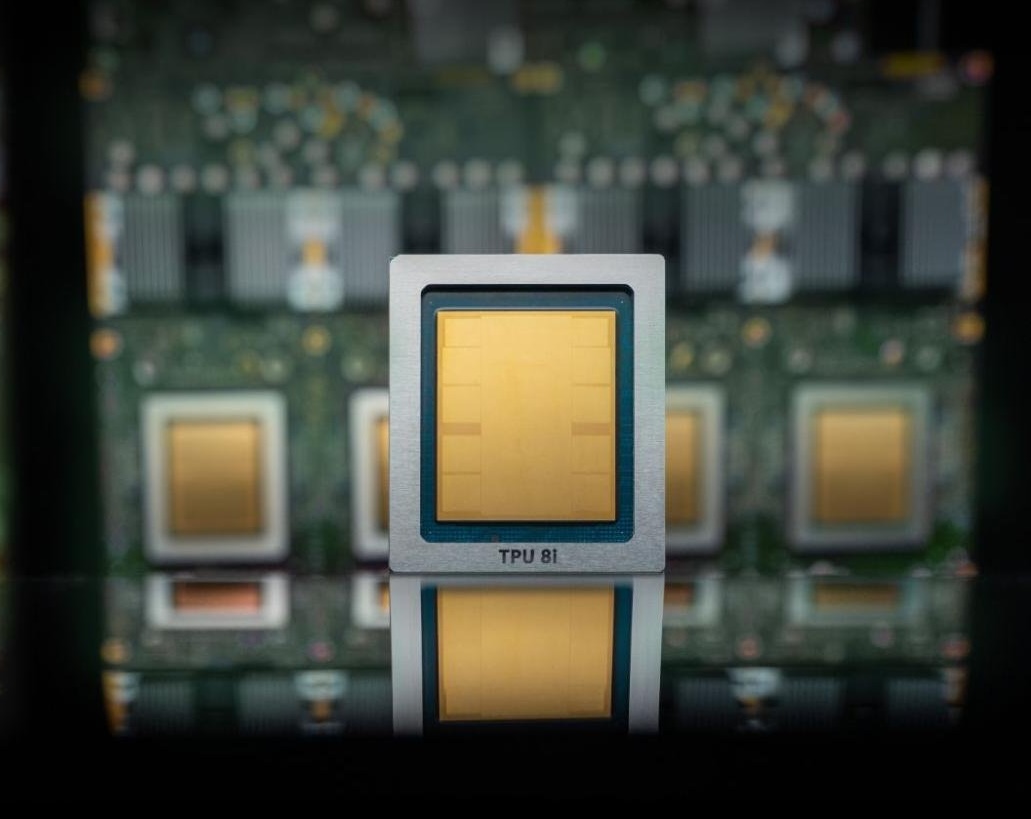

On Wednesday, Google Cloud revealed that its eighth generation of AI chips, known as tensor processing units (TPUs), will be split into two lines. The TPU 8t is designed for model training, while the TPU 8i is aimed at inference.

Inference is the ongoing use of a trained model: the work that happens each time a user enters a prompt.

As expected, the company touts large performance gains for the new TPUs over previous generations: up to three times faster AI model training, 80% better performance per dollar, and the ability to link more than a million TPUs in a single cluster. For customers, that promises significantly more computing power at lower energy use and cost than earlier versions. The chips are called TPUs rather than GPUs because Google’s custom low-power design was originally named Tensor.

Google’s chips are not meant to replace Nvidia’s entirely, at least not yet. Like other major cloud providers, such as Microsoft and Amazon, Google uses its own chips to supplement the Nvidia-based systems in its infrastructure rather than to supplant them. Google has even announced that it will offer Nvidia’s latest chip, Vera Rubin, in its cloud services later this year.

Eventually, the hyperscalers building their own AI chips (Amazon, Microsoft, and Google included) may come to rely less on Nvidia, especially as enterprises move their AI workloads to these clouds and adapt their applications to those chips.

For now, though, it remains risky to underestimate Nvidia. Chip market analyst Patrick Moore joked on X that back in 2016, when Google introduced its first TPU, he predicted the chip could spell trouble for Nvidia (and Intel). Nvidia’s market cap is now nearing $5 trillion, so that prediction did not quite pan out.

If Nvidia’s strategy unfolds as intended, Google’s growth as an AI cloud provider will mean more business for the chipmaker, even if many workloads run on Google’s chips.

Additionally, Google has committed to working with Nvidia on the computer networking that lets Nvidia-based systems run more efficiently in its cloud. Specifically, the two tech giants are working to improve Falcon, a software-based networking technology that Google developed and open-sourced in 2023 through the Open Compute Project.