Campbell Brown has dedicated her career to pursuing accurate information, initially as a TV journalist and later as Facebook’s news chief. Observing how AI is transforming information consumption, she believes history may repeat itself. This time, she’s proactive.

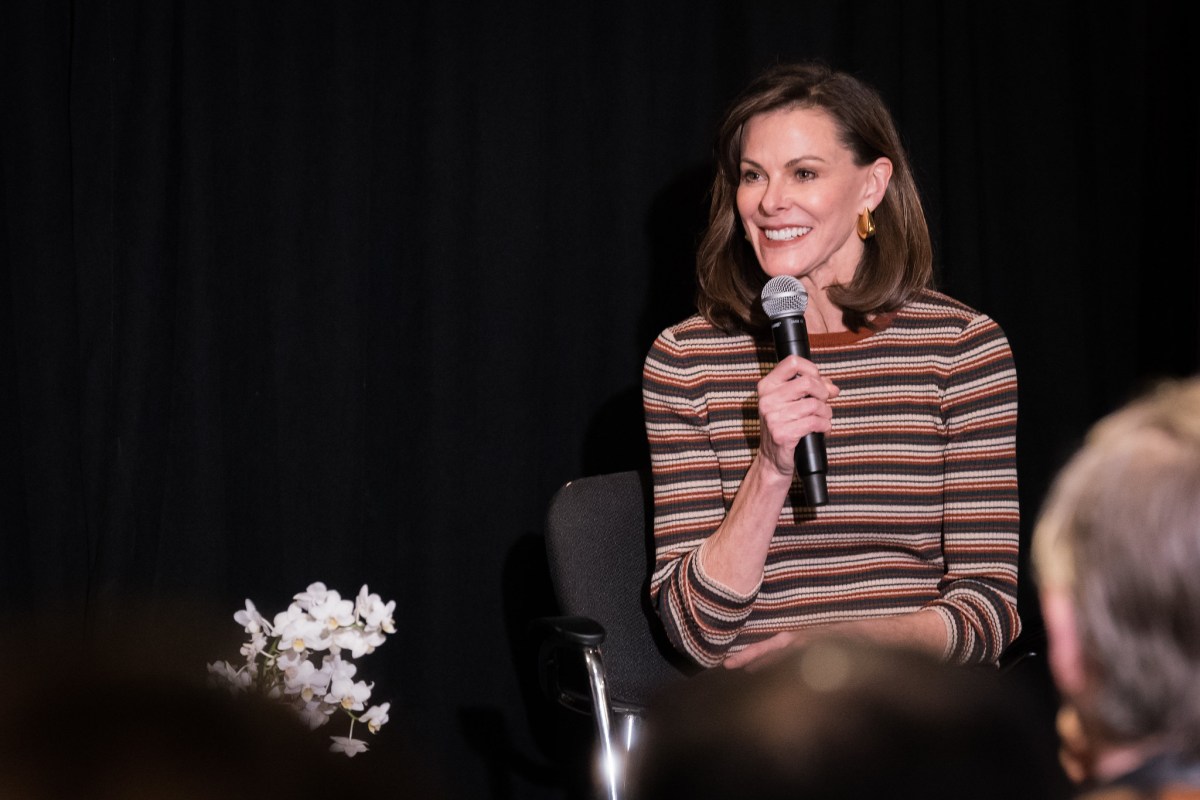

Her enterprise, Forum AI, assesses how foundation models handle “high-stakes topics,” such as geopolitics, mental health, finance, and hiring, where clear-cut answers are elusive. Brown discussed these efforts with TechCrunch’s Tim Fernholz at a StrictlyVC event in San Francisco.

The approach involves enlisting top experts to devise benchmarks and training AI judges to evaluate models on a large scale. For Forum AI’s geopolitics focus, Brown assembled a team including Niall Ferguson, Fareed Zakaria, former Secretary of State Tony Blinken, former House Speaker Kevin McCarthy, and Anne Neuberger, a cybersecurity leader from the Obama administration. The aim is for AI judges to agree with human experts 90% of the time, a target Forum AI claims to have achieved.
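The article does not describe how Forum AI measures consensus between its AI judges and its experts. As a rough illustration only, the 90% figure can be read as a simple agreement rate: the share of evaluated items on which the judge's verdict matches the expert's. The function name, the verdict labels, and the sample data below are all hypothetical.

```python
from typing import Sequence


def agreement_rate(judge_labels: Sequence[str], expert_labels: Sequence[str]) -> float:
    """Return the fraction of items where the AI judge matches the human expert.

    Hypothetical helper: plain percent agreement, not Forum AI's actual metric.
    """
    if len(judge_labels) != len(expert_labels):
        raise ValueError("judge and expert label lists must be the same length")
    if not judge_labels:
        raise ValueError("at least one labeled item is required")
    matches = sum(j == e for j, e in zip(judge_labels, expert_labels))
    return matches / len(judge_labels)


# Made-up verdicts on ten model answers (assumption: a binary pass/fail rubric).
expert = ["pass", "fail", "pass", "pass", "fail", "pass", "pass", "fail", "pass", "pass"]
judge = ["pass", "fail", "pass", "pass", "fail", "pass", "fail", "fail", "pass", "pass"]

rate = agreement_rate(judge, expert)
meets_target = rate >= 0.90  # the 90% target mentioned in the article
print(f"agreement: {rate:.0%}, meets target: {meets_target}")
```

Plain percent agreement is the simplest reading of the figure. It does not correct for matches that would occur by chance, so a real evaluation might prefer a chance-adjusted statistic.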

Brown traces the origin of Forum AI, which was founded 17 months ago in New York, to a pivotal moment. “I was at Meta when ChatGPT was first released publicly,” she said. She realized then that it would become a primary conduit for information. The prospect concerned her, especially for her children’s education, and prompted her to address accuracy issues.

She found fault with foundation model companies’ priorities. “They focus heavily on coding and math,” she noted, suggesting news and information present greater challenges. Yet, she insists difficulty doesn’t excuse neglect.

Forum AI’s evaluations have not painted a reassuring picture. Brown cited instances of Gemini drawing on content from China’s Communist Party for unrelated stories, and she noted a consistent left-leaning bias across models. Other flaws include missing context and straw-man arguments. “There’s a long way to go,” she said, though she believes straightforward fixes could markedly improve outcomes.

Reflecting on her time at Facebook, she remarked on the perils of prioritizing the wrong metrics. “We failed at a lot of the things we tried,” she admitted, citing her now-defunct fact-checking initiative. Still, she believes AI offers a chance to avoid repeating those mistakes.

“Currently, it could go either way,” she said: companies might cater to what consumers want, or they might opt for truthfulness. Although hoping for an AI that prioritizes truth may seem naive, she sees enterprise customers as a potential ally. Businesses that rely on AI for critical decisions care about accuracy, which aligns with Forum AI’s focus.

However, turning compliance needs into revenue is a challenge for Forum AI, because the market still favors superficial audits and standardized benchmarks that Brown deems insufficient. In her view, the compliance landscape is inadequate. She pointed to New York City’s AI hiring bias law, under which many violations were initially overlooked. Effective evaluation requires time and domain-specific knowledge: “smart generalists won’t suffice.”

Last autumn, Forum AI raised $3 million from Lerer Hippeau. Brown highlights the gap between how the AI industry sees itself and what users actually experience. While tech leaders claim AI will revolutionize the world, users frequently encounter errors and misinformation in chatbots.

Public trust in AI remains low, which Brown believes is often justified. “There’s a dissonance between Silicon Valley’s discourse and consumer reality,” she observed.