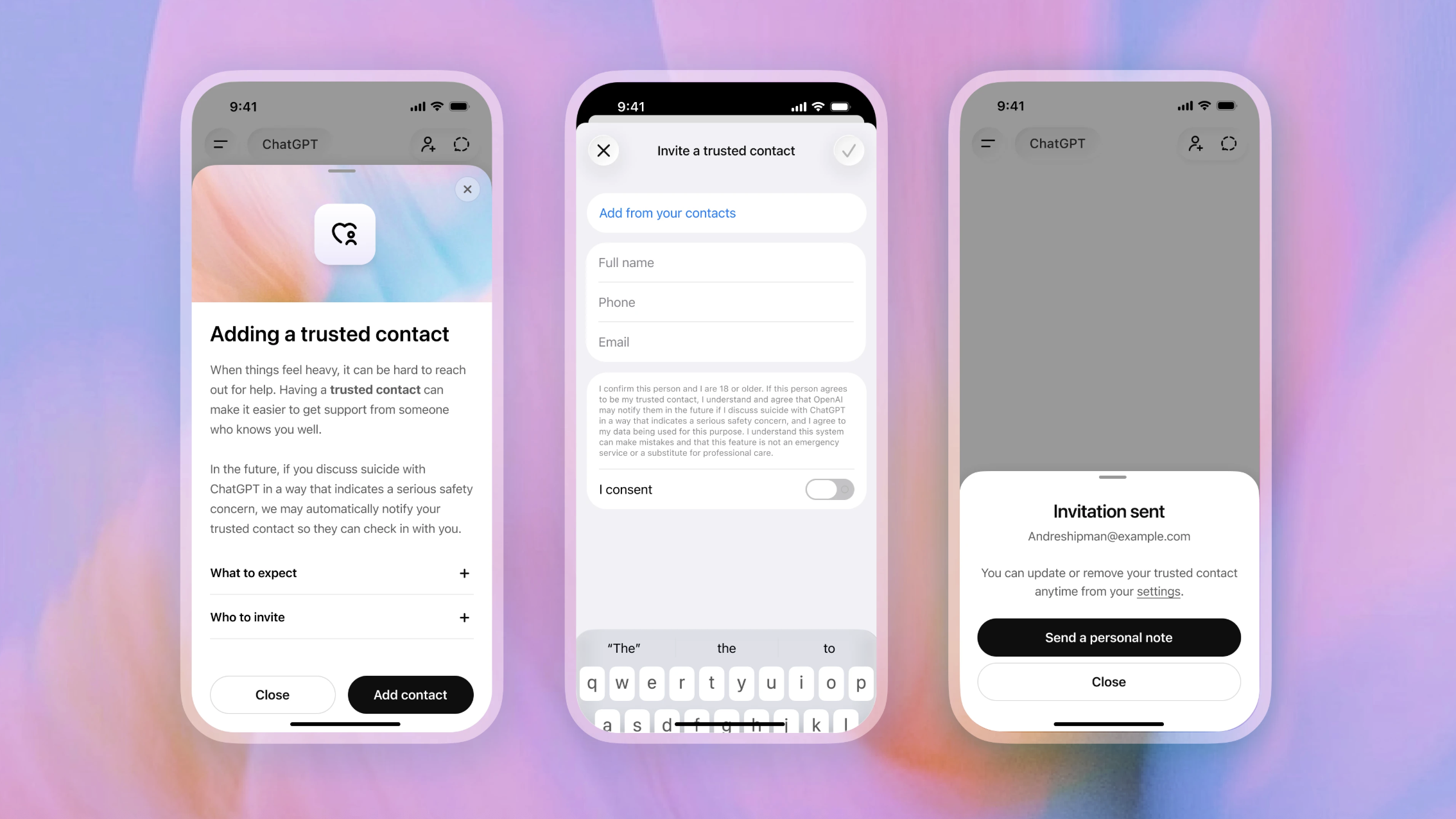

On Thursday, OpenAI announced a new feature called Trusted Contact, which notifies a trusted third party if a conversation mentions self-harm. The feature lets an adult ChatGPT user designate a trusted individual, such as a friend or family member, within their account. If a conversation suggests self-harm, OpenAI will urge the user to contact this individual and will also send an automated alert to the contact, prompting them to check on the user.

OpenAI is facing lawsuits from families of people who died by suicide after interacting with its chatbot. These families allege that ChatGPT either encouraged their loved ones to take their own lives or helped them plan it.

Currently, OpenAI uses a combination of automation and human oversight to address potentially harmful situations. Certain conversational triggers cause the system to flag possible suicidal thoughts, and the flagged conversation is then relayed to a human safety team. OpenAI says that a human reviews each notification and that it aims to address these alerts within one hour.

If the internal team assesses a situation as a serious safety concern, ChatGPT sends an alert to the trusted contact via email, text message, or an in-app notification. The alert is concise and encourages the contact to engage with the affected person while safeguarding user privacy by not detailing the conversation’s content.

The Trusted Contact feature builds on safeguards introduced last September that give parents some oversight over their teens’ accounts, including safety notifications if the system detects a serious safety risk. ChatGPT has long included alerts recommending professional health services if discussions veer towards self-harm.

Trusted Contact is optional, and because a user can have multiple ChatGPT accounts, enabling it on one account does not necessarily cover the others. The parental controls OpenAI introduced are likewise optional.

“Trusted Contact is part of OpenAI’s broader initiative to create AI systems that support people in difficult times,” the company stated in the announcement. “We will continue collaborating with clinicians, researchers, and policymakers to improve AI responses when people may experience distress.”