YouTube announced on Tuesday that it is expanding its “likeness detection” technology, which identifies AI-generated content such as deepfakes, to professionals in the entertainment industry. The technology is similar to YouTube’s Content ID system, which detects copyright-protected material in uploaded videos so that rights owners can request removal or share in the revenue. Likeness detection instead looks for simulated faces and is designed to protect creators and public figures from unauthorized use of their identities, a frequent problem for celebrities whose likenesses appear in scam ads.

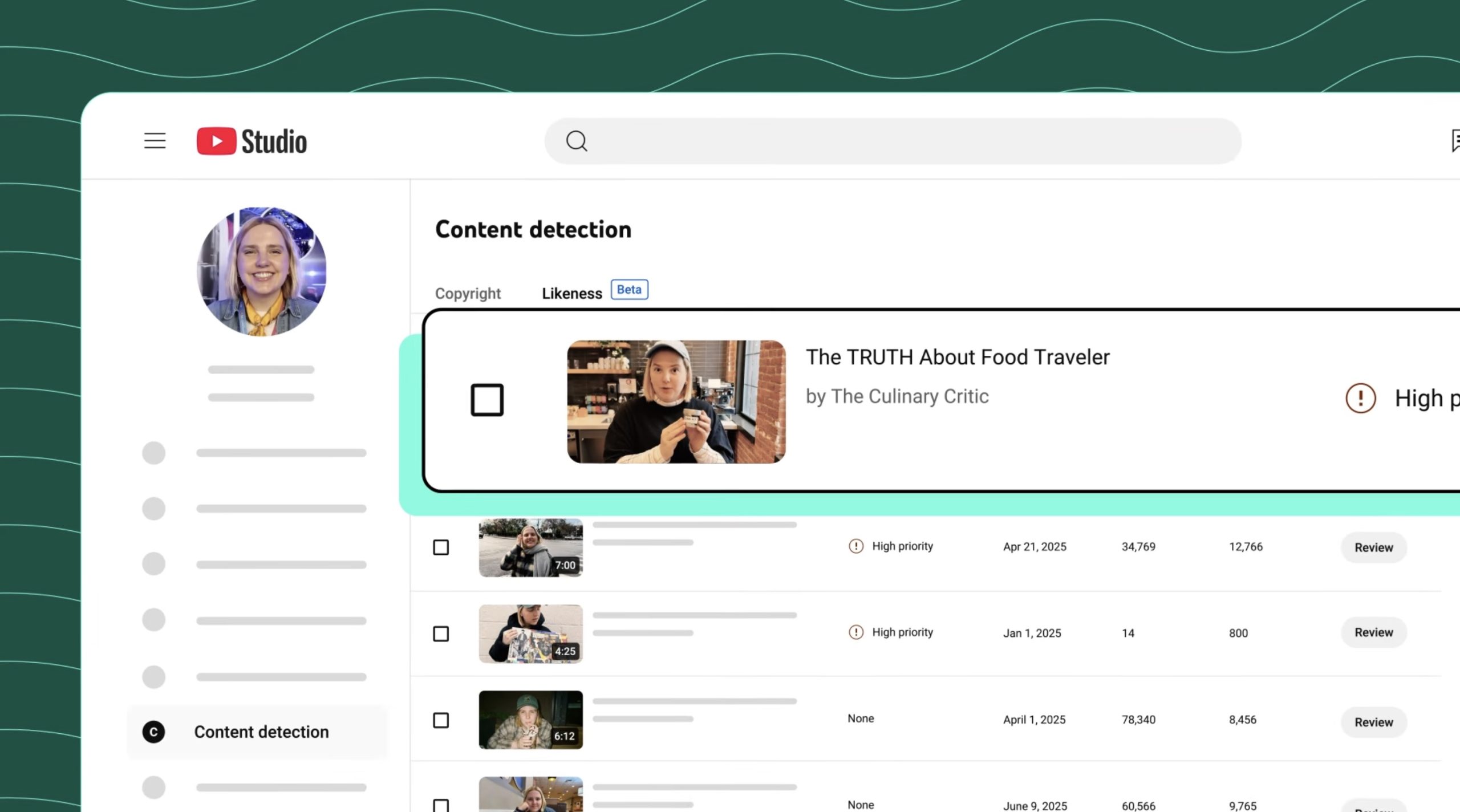

The technology first launched for some YouTube creators in a pilot program last year, then expanded to politicians, government officials, and journalists in the spring. It is now available to the entertainment sector, including talent agencies, management companies, and the celebrities they represent. Agencies including CAA, UTA, WME, and Untitled Management have provided feedback on the tool. Entertainers do not need their own YouTube channels to use it. The feature scans AI-generated content for visual matches of a participant’s face. Participants can then request a video’s removal for privacy policy violations, submit a copyright removal request, or take no action. YouTube clarifies that it will not remove all such content, because it allows parody and satire.

The company plans to expand the technology to support audio in the future. YouTube has also been promoting similar protections at the federal level by supporting the NO FAKES Act in Washington, D.C., which aims to regulate the use of AI to create unauthorized replicas of an individual’s voice and visual likeness. YouTube has not disclosed how many AI deepfake removals the tool has handled to date, but it said in March that the number of removals was still small.